College student put on academic probation for using Grammarly: ‘AI violation’

Marley Stevens, a junior at the University of North Georgia, says she was wrongly accused of cheating.

Simple solution. Ask the student to talk about their paper. If they know the subject matter, the point of the assignment is met.

This is the right answer. No tool can detect AI generated content with zero false positives, but someone using AI to cheat won’t actually know the subject matter.

That’s great for some people, but would be absolutely horrible for people like me. I usually know the subject matter, but I tend to have problems getting my thoughts out of my head. So I’d just end up getting double screwed if I were in this situation.

I’m reminded of the lecturer who was accused of being an AI when they sent an email.

Getting the triple-whammy of being accused of using an AI when you didn’t, drawing a blank during an oral interview/explanation, and then being penalised like you’d used one anyway, would be hellish.

Yes, which is why I hate job interviews, and especially people pretending to be good interviewers and telling stories about how somebody didn’t know something elementary. Well, maybe if it’s elementary, then the applicant did know it, and your questions just confuse people, which makes it mostly your fault (that’s not directed at anybody present).

Same. The anxiety kicks in and everything you ever knew leaves your brain in the span of half a second and doesn’t come back until the other person is free and clear of your presence.

I had to do a lot of presenting in college, which is more or less the same thing. There were peers who struggled with that, but they always talked with the Professors and I never came across a hard ass that would penalize them for it. Might not even be legal if it’s a medical condition.

turn it in is a fucking content farm anyway. you sign over your rights to them. we should insist schools stop using it.

You sign over rights to your works when you turn them in for grades anyway. The school can do whatever they want with your papers.

Which is such a fucking scam. You’re paying the school so the school has rights to your shit somehow?

My friend put his own Masters Thesis on libgen because fuck that absolute horseshit.

Based friend

Do you really? As in if you do a project and submit it it is then the property of the school? For instance if you wrote a program or did a research project, the school would have rights to sell it and not you? I had never heard that before.

If you’re genuinely asking, yes that is generally how it works.

That’s wack

When you’re a research assistant, a professor also gets all credit for your work, even if all they did was read the abstract.

I’ve been at the front of the classroom–using tools like TurnItIn is fine for getting “red flags,” but I’d never rely on just tools to give someone a zero.

First, unless you’re in a class with a hundred people, the professor would have a general idea as to whether you’re putting in effort–are they attentive? Do they ask questions? And an informal talk with the person would likely determine how well they understand the content in the paper. Even for people who can’t articulate well, there are questions you can ask that will give you a good feel for whether they wrote it.

I’ve caught cheaters several times, it’s not that hard. Will a few slide through? Yes, but they will regardless of how many stupid AI tools you use. Give the students the benefit of the doubt and put in some effort, lazy profs.

My sister once got a zero because of a 100% match in the system with her own same work uploaded there a few minutes before. It was resolved, but - not very nice emotions.

I’d have put a complaint in with the department for unprofessional conduct. If they can’t catch something that obvious, they aren’t even trying to run a class properly.

Well, I told her something similar, but apparently everybody involved was immediately apologetic, so.

Same thing happened to me at the beginning of this semester. Thankfully it got resolved, but it’s not a good feeling to be accused of 100% plagiarism.

Same, and discussed with my lecturers.

Especially 1st year business - we use the same text book as the last 10 years (just different versions), where nothing has really changed in the last 30 odd years, using the same template that runs through 600 odd students a year, where nearly every student uses the same easy three references that we used in class.

It’s new to you, but no one is going to have an original idea or anything revolutionary in that assessment.

Anyone marking an assignment with a TurnItIn report, who is also in possession of half a brain, knows to read through the report and check where the matches are coming from. A high similarity score can come about for many reasons, and in my experience most of those reasons are not due to cheating.

I’ve also been the one on the opposite side of the classroom. I was lab based, so we didn’t use Turn it in.

With a reasonably sized class, you can easily spot which students have worked together because their reports tend to be shockingly similar.

I agree that you get a feel for them with informal conversations and you can see how their submissions tie up with your informal conversations.

I used to tweak the questions year on year. I’ve suspected there is a black market, an assignment exchange, or something because I caught students submitting work from previous years. They were mainly international students that were only there for their masters year.

A professor once accused me of cheating because he mixed up my project with another student’s, marked that student’s project twice, and assumed I copied them… Academia is not always the place of enlightenment people imagine…

deleted by creator

Yes, but it’s been quite a while since it was. Now it’s a heinous cash grab that puts young people, who don’t understand basic finance, into lifelong debt. Long ago a tool like this would’ve probably been adopted by academia as a tool you need to learn to leverage in order to get to a better, more thorough understanding of a subject. We’ve capitalismed education and it’s hurting everyone.

Here where I live using AI detection tools is not allowed because they are not 100% correct, which means they might flag an innocent student.

“It is better that one hundred innocent college students fail a class than that one guilty college student write a paper with AI.” - Benjamin Academic

Something my instructors could never explain to me is what Turnitin does with the content of papers after they’re scanned. How long are they kept? Are they used for verifying anyone else’s work? I didn’t consent to any of that. When someone runs for office 20 years later are they going to leak old papers? Are they selling that data to other AI trainers? That’s some fucking bullshit. It needs to be out of the classroom for more reasons than just false positives.

It gets added to their database forever as far as I know. Unsure if they’re selling it, but based on the trajectory of capitalism, yes, they’re selling the fuck out of it to anyone who will buy.

I remember seeing some fine print when signing agreements for my college that any papers I write are intellectual property of the school. I’m guessing that’s standard nowadays.

College: You will pay us 30k a year and all your base belong to us!

Not shocked that this comes from TurnItIn. Has always been a garbage service in my experience. Only useful for flagging quotes, citations, class/instructor names, and my own name as plagiarism.

I saw it flagging “the […]. I am […]” it didn’t even care about the words in between, just decided to highlight the most common words in English in that one paragraph out of spite I guess.

It also once flagged my page numbering lmao, like I’m sorry I didn’t know I had to come up with a new and exciting numeric system for every essay I submit

Don’t forget the table of contents and page headings like “analysis” or “recommendations”.

Garbage software.

So the teacher uses an unreliable AI tool to do his job, to teach a student a lesson about allegedly using an AI tool to do her work, and the only evidence he has is “this proprietary black box language model says you plagiarized this assignment”. No actual plagiarism to cite, just a computer generated response arbitrarily making accusations. What’s the lesson here? AI models are so unreliable, when we use them we punish you for things you didn’t do, so don’t you dare use them for schoolwork?

It has a 1% false positive rate. If you have students turn in 20 assignments each semester, roughly 1 in 5 students will get flagged, and potentially disciplined, for plagiarism they didn’t commit. All because a teacher was too lazy to do his job without blindly accepting the results of an AI tool, while pretending that they are against such things as a matter of academic integrity…
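For anyone who wants to check the “1 in 5” arithmetic, here is a minimal sketch. It assumes the 1% false positive rate quoted above applies per assignment and that each check is independent of the others. Both are assumptions for illustration, not published figures, and the function name is made up.

```python
def prob_at_least_one_false_flag(false_positive_rate: float, assignments: int) -> float:
    """Chance an honest student is wrongly flagged at least once.

    Assumes every assignment is checked independently with the same
    false positive rate (an illustrative assumption).
    """
    # P(no false flag on any assignment) = (1 - rate) ** assignments
    return 1.0 - (1.0 - false_positive_rate) ** assignments


if __name__ == "__main__":
    p = prob_at_least_one_false_flag(0.01, 20)
    print(f"{p:.1%}")  # prints 18.2%
```

So under those assumptions it comes out a little under 1 in 5 per semester, and it only climbs the more assignments get scanned.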

I remember when grammar and spellcheck tools became available, it was hilarious running well-known texts through them and accepting all the changes.

this is ridiculous. spell and grammar check tools != AI content generators or plagiarism.

edit: apparently grammarly has changed a lot since i last used it.

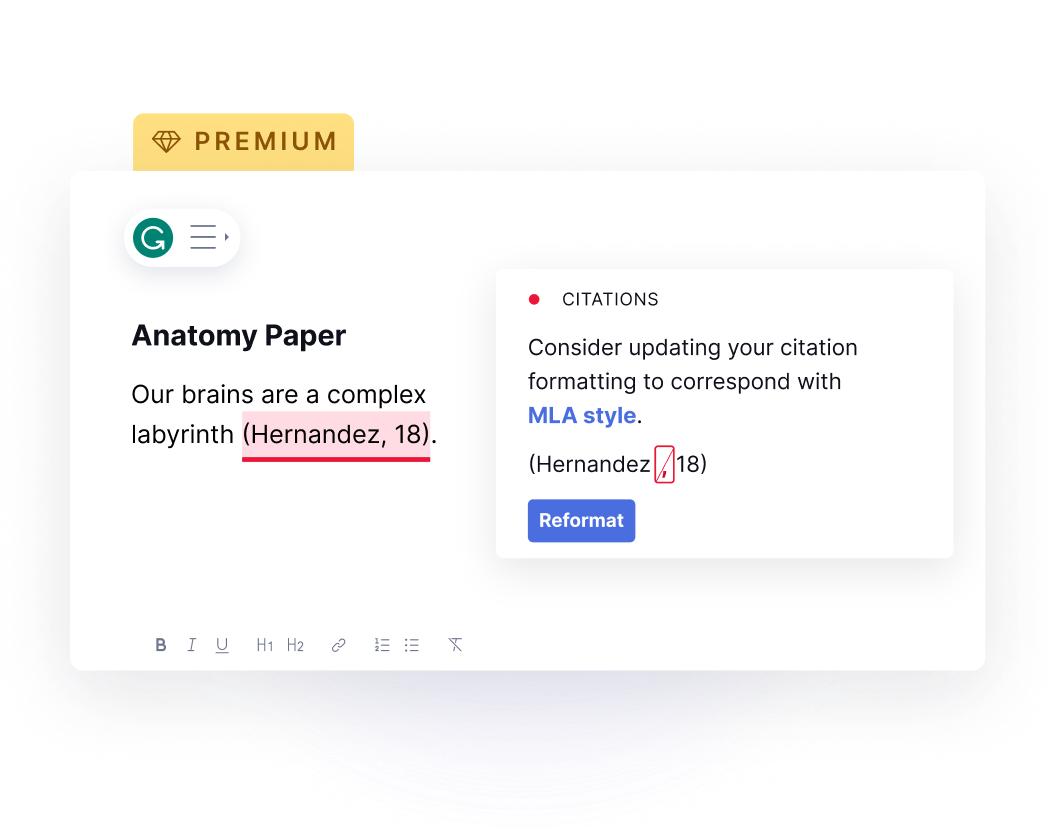

Grammarly is a lot more than a spell checker. Here are some screenshots from their marketing page that specifically recommends using their product as a student.

aha, grammarly has changed a lot since i last used it.

I feel the need to point out, this is exactly the same type of feedback you’d get from a competent proofreader.

But you still need to put the content in there. All it does is do the boring formatting stuff. The real crime is not teaching students latex.

Ehhh…

They integrated GPT last year. It’s entirely possible to use Grammarly in a way that raises academic integrity concerns nowadays.

That’s still a bullshit vague intro. Like you still need to feed it what you are introducing and ideally how you want to get there. Again, this depends on whether this is an English writing class or anything else. Cause the point of the essay is to convey the point, knowing you need an intro is the key point, writing something to get into your meat is 40% of the boring bullshit you need to write in a report, the other 40% is the conclusion and formatting. Using AI to streamline that is not cheating unless it’s an English writing class. These are tools you use to convey your point better. You need a point to begin with.

This is like saying calculators are gonna make math homework easier. Make better homework!

and it’s not like these AI detection tools aren’t snake oil either.

Using AI to streamline that is not cheating unless it’s an English writing class.

Using AI to streamline that is cheating if and only if the course syllabus defines it as cheating.

Also, I hate to break it to you, but somewhere between “many” and “most” college classes are writing classes in disguise, depending on your major. The ability to write well is massively important, and generative AI is prohibited for the same reason that teachers try to make sure you understand arithmetic before letting you use a calculator. The key difference is that writing is subjective and way more complex, so the best teachers can aim for is continuous improvement.

I say all this as a college student who uses AI nearly every day. A good chunk of my peers absolutely misuse it.

and it’s not like these AI detection tools aren’t snake oil either.

Indeed, they are.

My point is this tool exists and you can’t say for certain that someone is using it. If you want to give someone a real education, find a way to make sure they learn despite that. If you end up using AI for a nuanced essay, it’s not going to answer that properly and a teacher would grade that as subpar work. Good work with the AI would be to act as an editor, determining if what’s said is accurate and if it should be in your paper. Bad work with the AI would be to not be an editor. There is still a job the student has to do and learn.

I say this as someone who grades work handed in by students.

I totally agree, there is rarely any way to tell if (and more importantly, to what extent) student-submitted writing is AI generated. We’re probably also pretty close to AI being able to generate outstanding work while mimicking your own writing style. For this reason, in my mind, the era of take-home writing assignments is coming to a close.

I’m actually okay with this, as it will hopefully force teachers to be more creative with and involved in the learning process. One of my biggest takeaways from 12 years of grade school was that homework trends over the last few decades are patently absurd, fueled in large part by lazy teaching. I see AI as a chance to finally correct that trend.

A calculator (in most cases) can’t just do a problem for you, and when it can those calculators are banned (the reason you can’t use TI-84s on gen chem exams in college, or a TI-89 in a calc 1 class). Such a tool means that you really don’t have to understand the problem to get the answer. To me your comment reads as if getting the answer to a problem by typing it into Wolfram Alpha is the same as working through the problem on your own, as long as you understand how WA got there. I wholeheartedly disagree that somebody who is using Wolfram Alpha to get all of their answers actually knows jack shit about math, kinda like how anybody using generative AI for writing doesn’t have to know jack shit about the subject and can just give a semi-specific prompt based on a small amount of prior research. It’s very easy for me to type into a GPT bot “write a paper on the social and political factors that led to the Haitian revolution.” It’s a completely different experience to sift through documents and actually learn what happened, then write about that. I’m fairly confident I could “write” a solid paper using AI without doing almost any research if it’s a topic I know literally anything about. E.g.: I don’t know very much about the physics of cars, but I can definitely get generative AI to give you a decent paper on how and why increases in engine size can lead to an increase in efficiency just by knowing that fact to be true and proofreading the mess the AI throws together for me. The fact that you consider these tools the same as a calculator (which, I might add, we still often restrict the use of, e.g. no Wolfram Alpha on your multivariable final) is astounding to me tbh.

My point is the tool is out there and you can’t definitively prove that someone used AI. So we better figure out how to use it and test with the assumption that someone’s using it. ChatGPT is a fucking inaccurate mess. If you as a professor can’t catch up with that, you’re not doing your job. And using these AI detection tools is stupid and doesn’t fix the problem. So what do we do now?

I agree, but also grammarly did get into the ai market. https://www.grammarly.com/ai

oh, really…

What an awful website

I feel like this article was mostly interested in just showing her pictures. 🙄

nypost-- yeah i believe it

She can just show them the version history in Google Docs.

God damnit Kevin quit trying to extort the sexy college girls

My masters program told us to use AI all we wanted but just cite the use.

Masters… writing all those citation pages… so what site did you use to generate those?

My uni has a specific section on using AI - reference it, confirm the points, if any sources aren’t cited it’s still on you.

Seems like the same sort of solution as my school had about Wikipedia, which is by all means use it, but cite the sources, not the content.

Quite a lot of AI will give you sources that you can check when they are referencing stuff, so just check those references to make sure it’s not made things up and then as long as it’s fine cite those websites and articles.

nice!

You may not agree with the policy or the tools used, but the rules were clear, and at this point she has no evidence that she did not use some other Generative AI tool. It’s just her word against another AI that is trained to detect generated material.

What is telling is her reaction to all of this, literally making a national news story because she was flagged as a cheater. I promise if she wasn’t white or attractive NY Post wouldn’t do anything. What a massive self own. Long after she leaves school this story will be the top hit on a google search of her name and she will out herself as a cheater.

You shouldn’t put too much stock in these detection tools. Not only do they not work, they flag non-native English speakers for cheating more than native speakers.

And flags anything you’ve previously submitted, including plans.

they flag non-native English speakers for cheating more than native speakers.

Yes, and for me as one of the former it’s absolutely clear why: because I’m doing the same thing as a generative model, imitating text in another language. Maybe with more practice in verbal communication and being more relaxed I could reduce this probability, but the thing is this is not something which should affect school tests at all.

These are people trying to use a specific kind of tool where it’s fundamentally not applicable.

What clear rule did she violate though? Like, Grammarly isn’t an AI tool. It’s a glorified spell check. And several of her previous professors had recommended its use.

What she did “wrong” was write something that TurnItIn decided to flag as AI generated, which it’s incredibly far from 100% accurate at.

Like, what should she have done differently?

You can’t create a reliable AI to detect AI. Anyone that told you otherwise is selling you snake oil.

i don’t believe she cheated, but i also don’t care.

i do think being a conventionally attractive blonde did help her get coverage.

i also want turn it in to die in a fire.

i’m very conflicted about your comment, but i’m not conflicted about this situation at all: stop using turn it in, and put the girl back in school.

i do think being a conventionally attractive blonde did help her get coverage.

No doubts, though conventions are a bit different where I live.

If that puts pressure on something systematic, that’d mean someone’s individual attractiveness being good for everybody.

How do you provide evidence you didn’t use something???

I can make an offline AI say absolutely anything in any way shape or form I would like. It is a tool that improves efficiency in those smart enough to use it. There is nothing about it that is different than what a human can write.

This is as stupid as all of the teachers that used to prevent us from using calculators for math 20 years ago. We should be encouraging everyone to adapt and adopt new technology that improves efficiency, and take on the real task of testing students with intelligent adaptive techniques. It is the antiquated mindset and academia that is the problem. Anyone that can’t adapt should be removed. When the student enters the workforce, their use of such efficiency improving tools is critical.

deleted by creator


You need to sit down with an offline LLM and learn what they can actually do. It is not good at doing the work for you. It is excellent at helping you explore yourself in countless ways you can never access on your own. It can answer all of the questions you don’t quite understand as you try and navigate a new subject. It is easily able to amplify and accelerate the learning process. It can be abused like anything, but there is nothing new about that.

The articles and framing of AI as something bad is all coming from manipulation of the media by Altman and company. It is about trying to control the next tech monopoly that will dominate the next decade. It is already too late for that though. Open source offline AI will beat what OpenAI has tried to control. Yann LeCun is the person to watch in this space. He is a Bell Labs alumnus pushing open source AI as the head of Meta AI. If you know anything about the current digital age, that combination of someone from the old Bell Labs pushing open source to lead an industry without trying to monopolize it should mean a great deal.

AI is not really super capable like some kind of AGI. It is like Stack Overflow or old forum threads level helpful with complex tasks. It is also a mirror of both the dataset’s culture and the person that creates the prompts. It is only as good as your vocabulary and ability to understand its idiosyncrasies while communicating on a level of openness that humans are not accustomed to. This is an evolved tool. It is not AGI. It is not persistent. It can not learn on its own. There are very real limitations with how much information can be processed at once, and limitations for niche information. This is no time to be a Luddite. It is still an order of magnitude less capable than a human but offers access to tailored information on a level that has only been available to the super rich that hire tutors for their children and make major donations to institutions in the real “cheating” of the system you will never be able to object to.

I greatly value learning, so much so that I jumped at the opportunity to have custom tailored learning the second I had the chance. It ended up being even better than I expected. There are scientific models and several ways to set up a model with your own documents where it can answer questions and cite sources.

… No proof she didn’t? What could possibly prove that?

Can you give me an example of this proof? And if so, is that something reasonable for a student to have?

Seriously, think it through.

If you write something in Word or an equivalent program, there will be metadata of the save files that shows creation and edit timestamps. If they use something like Google Docs, there’s a very similar mechanism via the version history. I actually had the metadata from a Word document be useful in a legal case.

Ok, and that’s proof of what exactly? That you made the file when you said you did?

Not to mention, you can set those to whatever value you want

I can see how it could be part of a court case, because it’s one more little corroborating detail. It doesn’t prove anything though
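On the “set those to whatever value you want” point, here is a minimal Python sketch. It assumes we are talking about the filesystem’s modification time, not the metadata stored inside the Word file itself, which this does not touch. The file name and the helper function are hypothetical.

```python
import os
import tempfile
from datetime import datetime, timezone


def backdate_file(path: str, when: datetime) -> float:
    """Set a file's access and modification times to `when`; return the new mtime."""
    ts = when.timestamp()
    os.utime(path, (ts, ts))  # (atime, mtime), in seconds since the epoch
    return os.stat(path).st_mtime


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        essay = os.path.join(tmp, "essay.docx")  # hypothetical file name
        with open(essay, "w") as f:
            f.write("placeholder")
        fake = datetime(2020, 1, 15, 9, 30, tzinfo=timezone.utc)
        new_mtime = backdate_file(essay, fake)
        print(datetime.fromtimestamp(new_mtime, tz=timezone.utc))
```

Which is why a timestamp on its own is corroborating detail at best.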

The edit history would show things like copy/pasting large blocks of text versus normally typed edits.

A quick search shows you can edit this as well… That is interesting though, I didn’t know it existed

Give me a couple hours and I could build something that makes pastes appear to be keystrokes. Give me a weekend, and I can build something mathematically indistinguishable from a human typing that will hold up to intense scrutiny

It still doesn’t prove anything, it’s just one more piece of circumstantial evidence. Still, it’s not unreasonable to paste the full text into it, or mix and match. Maybe you don’t have word installed on your computer - I don’t, I haven’t since I was in school myself. It’s reasonable to use word on school computers but do all of the work on an online text editor, then pasting into word on a school computer

You may not agree with the policy or the tools used, but the rules were clear,

OK, if you’ll be consistent and agree that using tarot cards to determine who’s cheating is normal, if rules say that.

and at this point she has no evidence that she did not use some other Generative AI tool

Your upbringing lacks in some key regards.

It’s just her word against another AI that is trained to detect generated material.

There are (or should be) allowances for the degree of precision where any tool can be trusted. If it is wrong in 1% of cases - then its use is unacceptable. In 0.1% - acceptable only if she doesn’t argue it. In 0.01% something - acceptable with some other good evidence.

I’ll help you become a bit less of an ape and inform you that an “AI” (or anything based on machine learning) can’t be used as a sole detector of anything at all.